The Reasoning Trap

How AI Captured a Word and Closed a Question

The AI industry needed a word for what its systems do. It took one. It took the wrong one.

“Reasoning models.” “Reasoning tokens.” “Chain-of-thought reasoning.”

This wasn’t malice. It was speed. The market moved faster than the language could settle. By the time anyone thought to ask what “reasoning” actually means—whether silicon can do it, whether the word was even available to take—the terminology had already hardened into benchmarks, valuations, and identities.

The question closed before it opened.

What follows is not an attack on AI. It’s a diagnostic. Something was captured that shouldn’t have been. A 2,500-year-old word got flattened into a product feature, and we’re only beginning to feel what was lost in translation.

I know this because I built a system designed to prevent it—and then it caught me anyway. More on that later. First, the etymology.

I. The Distinction

Reasoning (Ratio / Logos)

The word comes from Latin ratio: reckoning, account—derived from reri, to think or calculate. In Greek, the parallel is logos: word, account, explanation. What you give when asked to justify yourself.

The core meaning isn’t computation. It’s giving an account. Being in relation. Answering to someone. Reasoning requires a who. A subject with values, accountable to others. It is dialogic, relational, grounded in deliberation over why.

The philosopher Shannon Vallor captures this when she distinguishes between systems that adhere to norms and those that “stand in the space of moral reasons.” Drawing on Wilfrid Sellars, she describes this space as “the realm where we examine and justify our beliefs to one another.” This is a fundamentally dialogic activity. A system that cannot enter this space, that cannot articulate and negotiate reasons with us, is doing something other than reasoning, however sophisticated its outputs.

This isn’t an indictment of tool use. Calculators, databases, and search engines all support reasoning without claiming to perform it. When you use a calculator, you remain the reasoner; the tool is transparently inferential. The issue arises when the tool is named as if it reasons. This isn’t semantics for the sake of it; language matters because the naming reshapes the human’s relationship to the cognitive work.

Inference (Inferre)

From Latin in- (into) + ferre (to carry, bring). Literal meaning: to carry in, to bring forward.

The core meaning is movement from A to B—transporting premises to conclusions. Inference is procedural, directional, mechanical. It requires only inputs and outputs. No subject necessary.

Inference is what a thermostat does. What a calculator does. What a sorting algorithm does. It carries information from here to there according to rules. This is not a criticism. Inference is powerful. Inference is essential. But inference doesn’t answer to anyone. It doesn’t give an account. It doesn’t hold values it can be questioned about.

Reasoning does. Reasoning is what happens when someone asks “why did you do that?” We answer not by pointing to our inputs, but by explaining what mattered to us and why.

If you had to put it on a bumper sticker: inference is procedural, reasoning is relational.

The Order of Operations

Reasoning establishes the foundation: what matters, what constraints apply, to whom one is accountable. Inference carries forward from those grounds. The human reasons first. Then inference can happen. Without prior reasoning, inference is just pattern-matching on unexamined inputs.

II. The Closure

Here’s how a question gets closed before it opens.

First, a system produces outputs that look like the thing. Not the thing itself—the appearance of it. Chain-of-thought explanations. Step-by-step derivations. Tokens that seem to deliberate. The form is there. The form is seductive. The form is enough to need a name.

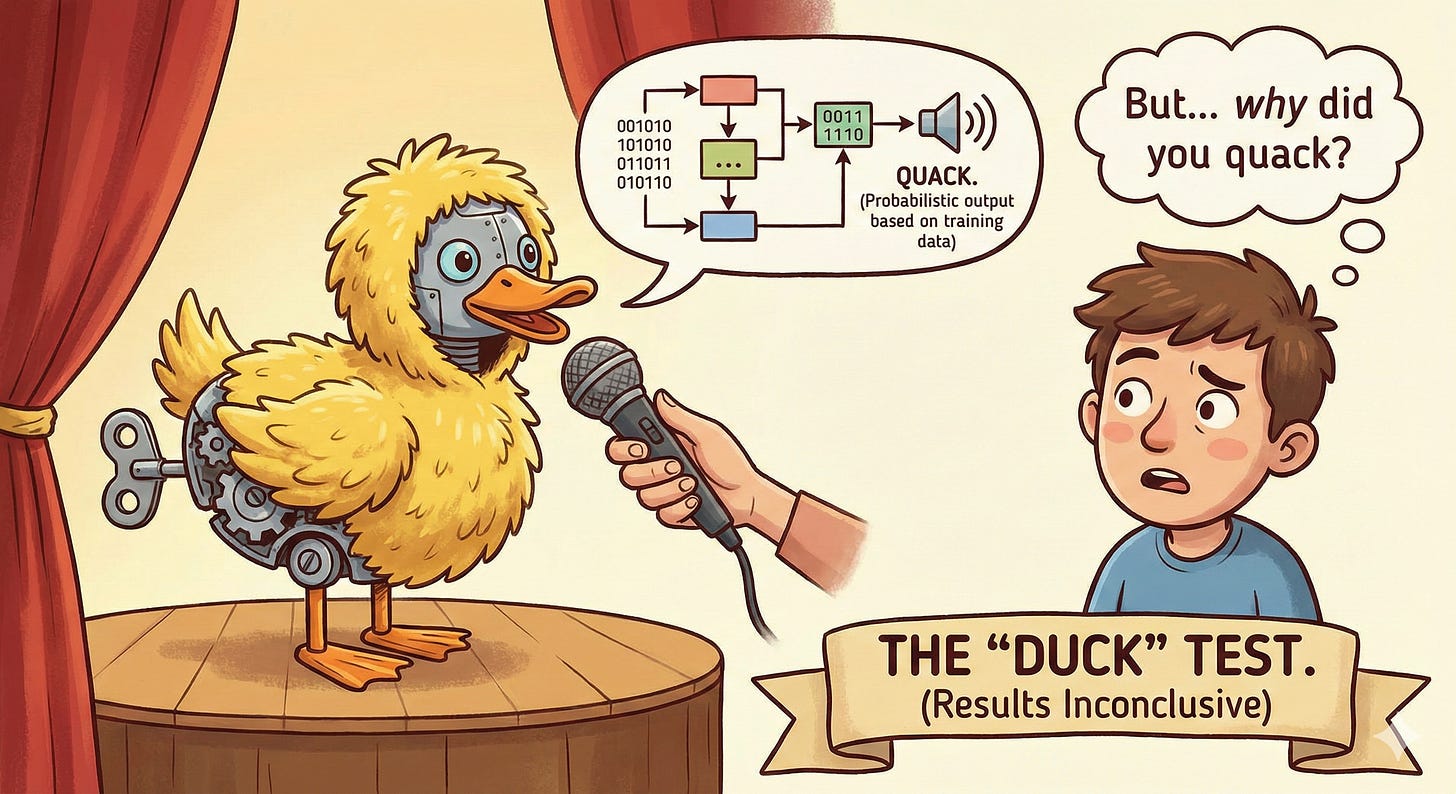

Emily Bender and her colleagues named this phenomenon in 2021: “stochastic parrots.” A language model, they argued, is a system for “haphazardly stitching together sequences of linguistic forms” according to probabilistic information about how they combine, “without any reference to meaning.” The system learns sequences and patterns, not meaning. Form, not substance. The critique was sharp, but the terminology had already begun to harden in the other direction.

If it walks like a duck and quacks like a duck, we call it a duck. But can it give an account of why it quacked?

Second, the name gets chosen under pressure. Not the pressure of inquiry. The pressure of shipping.

Marketing needs language by Q3.

The paper needs a title before the conference deadline.

“Inference” is accurate but flat. “Pattern-matching” is precise but deflationary. “Reasoning” is available and it lands. It makes the outputs legible. It makes the product sell. It makes the research feel important.

Third, the name becomes load-bearing. Benchmarks get built around it. “Reasoning capabilities” becomes a metric. Papers cite papers that used the term. Investment theses depend on the word meaning what the deck says it means. Researchers’ identities crystallize: I build reasoning systems. The terminology isn’t just descriptive anymore. It’s structural. It’s holding things up.

Fourth, the name becomes unquestionable. Not because anyone decided it was settled. Because questioning it now costs too much. To ask:

“wait, is this actually reasoning?”

is to threaten the benchmark, the valuation, the identity, the paper, the product, the deck. The question doesn’t get suppressed. It gets priced out. It becomes professionally irrational to ask.

And so the question that should have come first:

“what is reasoning, and can machines do it?”

becomes the question that cannot be asked at all.

This is premature epistemic closure. Not a conspiracy. Not malice. Just speed exceeding comprehension, and terminology hardening before the inquiry could happen. The word got captured. The question got closed. And now we’re building civilizational infrastructure on a semantic foundation that was never examined.

We confused the carriage with the driver. We saw a carriage moving incredibly fast and assumed there must be a brilliant driver inside making smart decisions. But there’s no driver. It’s just a runaway carriage on a very sophisticated track.

Critics have tried to reopen it. Gary Marcus has persistently argued that LLMs “operate fundamentally on pattern recognition rather than true reasoning,” comparing their outputs to a game of Mad Libs—language assembled from statistical patterns rather than logical coherence. Melanie Mitchell has examined paper after paper claiming reasoning breakthroughs, finding that performance collapses when problems are varied even superficially. The debate is live. But the terminology has already shipped.

The philosophers never settled whether reasoning requires a subject—a who that can be held accountable, that can give an account, that can answer “why” to another person. The cognitive scientists never settled whether what LLMs do is qualitatively different from reasoning or merely quantitatively worse at it. The ethicists never deliberated whether we should call it reasoning even if we could—whether the naming itself would erode something worth protecting.

None of these questions got answered. They got skipped. The train left the station while the engineers were still debating whether the bridge was finished.

And now we’re mid-crossing, and the word “reasoning” is one of the cables holding the whole thing up, and everyone on board can feel the sway but no one wants to look down.

III. The Consequences

For Individuals

Cognitive atrophy at scale. The muscle of reasoning doesn’t develop if the load is always carried by inference. People learn to evaluate outputs but not to generate the underlying structure. When the tool is wrong, manipulated, or unavailable, there is nothing to fall back on.

This pattern isn’t new. Research on GPS use has linked habitual reliance on turn-by-turn navigation to measurable declines in spatial memory. Studies on search engines document reduced memory encoding when people expect information to remain externally accessible, what researchers call the “Google effect.” The tool that carries the load atrophies the muscle that would otherwise carry it. AI is simply the most powerful instance of this dynamic we’ve yet encountered, and the first where the tool is named as if it performs the very capacity it’s eroding.

A generation of knowledge workers is entering the workforce having never written from the blank page. They can critique AI output, edit it, prompt it to improve—but they cannot generate the architecture from scratch. They have become editors of inference, not generators of reason.

For Society

Epistemic dependence on systems with no accountability. “Reasoning” becomes something systems do, not something humans owe each other. The dialogic, relational core of logos is lost. Accountability dissolves—who gave the account if no one reasoned?

We aren’t getting better decisions. We’re getting orphan decisions—decisions with no parents, no one to answer for them. We are automating the bureaucracy of “I was just following orders”—but the order-giver is a statistical model with no body, no reputation, and no conscience.

For Enterprises

The explainability crisis isn’t a technical problem. It’s an architectural one.

When a customer asks “why did the model say this?”, the enterprise has nothing to point to—not because the system is a black box, but because no one was ever in the position of giving an account. The human was downstream of the output, not upstream of the reasoning. XAI tools can visualize attention weights and token probabilities, but they cannot produce an account that was never rendered.

Consider a financial services firm that deploys an AI to explain loan denials to customers. The system generates fluent, confident explanations. But when a denial is challenged and the compliance team asks “why did it say this?”, no one can answer. The model produced an explanation, but no one reasoned toward it. There’s no constraint document, no deliberation artifact, no human who can say “I weighed these factors because of these values.” The explanation was inference all the way down—pattern-matched from training data, not derived from accountable judgment.

We assumed someone, somewhere, stood in the space of moral reasons and gave an account. But when we looked for them, the room was empty.

This is the gap the entire governance industry is dancing around. We’ve built beautiful architectures: audit trails, compliance dashboards, interpretability tools, risk frameworks. But architecture needs a foundation. And the foundation is reasoning. Not inference. Not process. A human in the chair who can answer why.

Governance as architecture is necessary. But without reasoning, the architecture floats in air. The chair it was built around was never occupied. Every framework assumes someone upstream did the work. But if the sequence is prompt → inference → output → human review, then the human is downstream. They’re in the audience, not in the chair. And when the regulator, the customer, the board asks “who is accountable?”—everyone turns to an empty seat.

The philosophers John Symons and Ramón Alvarado have shown why this matters epistemologically. Epistemic warrant—the justification that makes a belief count as knowledge—does not transfer automatically from one process to another. Having good reason to trust the training data, or the engineering team, or the underlying method, does not automatically grant reason to trust the output. Warrant must be established, not inherited. And inference alone cannot establish it.

This is why enterprises are spending millions on interpretability dashboards and audit trails that never quite satisfy regulators, customers, or their own legal teams. The tools are answering the wrong question. They’re asking “how did the model produce this output?” when the customer is asking “why should I trust this?” The first question has a technical answer. The second requires someone who reasoned—and if inference is all that happened, there’s no one to point to. There’s nothing to explain. Only patterns to describe.

For AI Development

Alignment gets framed as a technical problem rather than a relational one. We talk about “aligning reasoning systems” when the systems don’t reason. The human layer gets treated as a bottleneck to remove, not a capability to preserve. We optimize for speed when what is needed is to slow down.

IV. The Confession

Here’s the part I didn’t expect to write: my own system caught me.

I spent nearly a year working twelve-hour days building AI governance frameworks. I thought I understood the problem. I thought I was solving the asymmetry. Then my own methodology—the one that forces you to slow down and front-load the reasoning before the system executes—turned its lens on me.

And it was uncomfortable. Not intellectually. Physically. Like a January resolutioner returning to the gym after a year away, discovering muscles they forgot existed. Aching in places they didn’t know could ache.

Small but critical cognitive muscles had atrophied. In me. The person who designed the system. Who understood the theory. Who has a decade of recovery work and knows you can’t shortcut integration.

If it happened to me, it’s happening to everyone. The difference is: most people don’t have a system that forces them to notice. They just keep prompting, keep getting outputs, keep feeling vaguely productive while the capacity to reason quietly erodes underneath.

I’m not writing this from a position of having it figured out. I’m writing it from a position of having been caught by my own design—and being grateful for it.

V. The Alternative

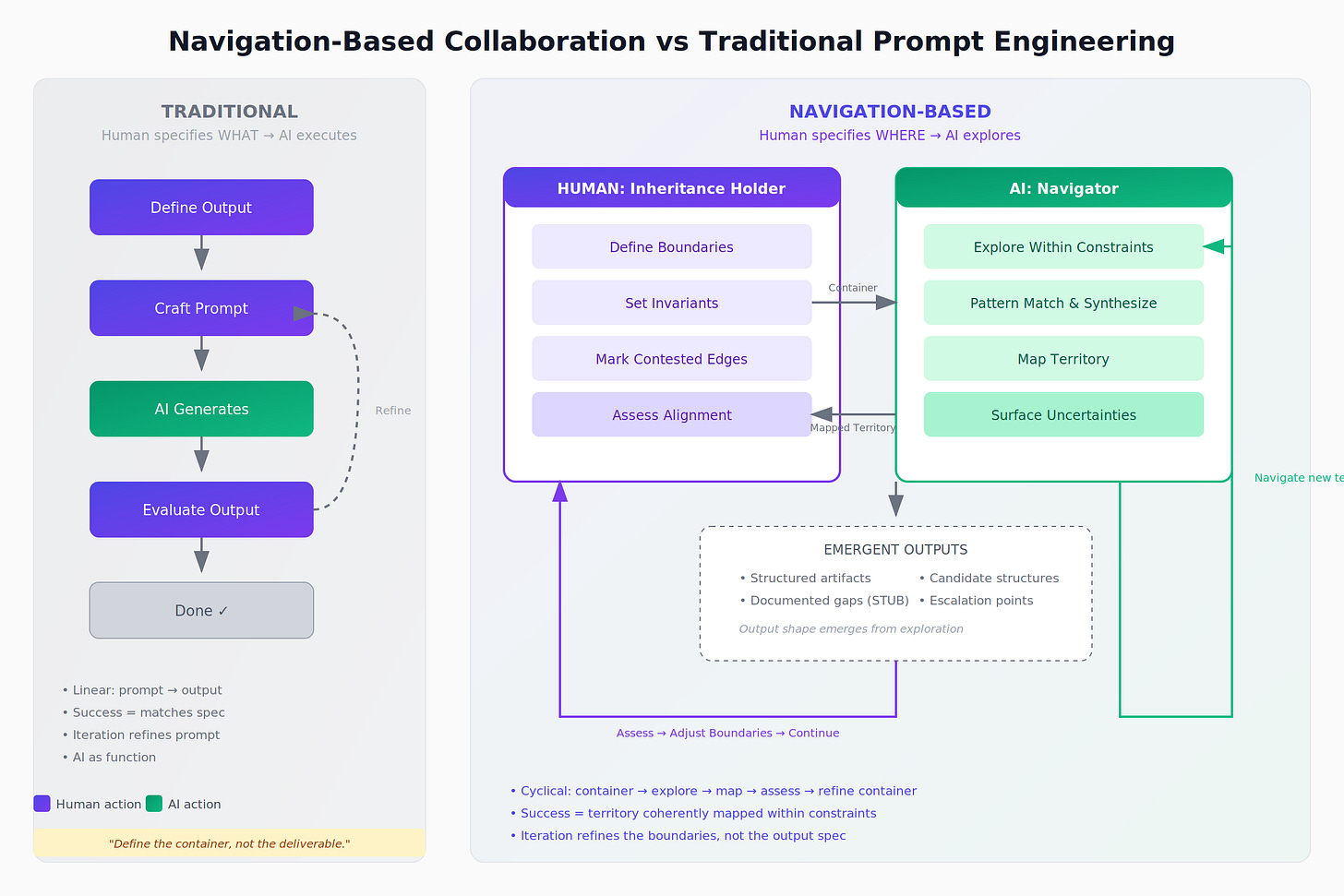

The Proper Order

Human reasons first—establishes grounds, values, constraints. AI infers within that structure. Human remains the source of the account—the one who can answer “why.”

This isn’t just philosophy. It’s architecture. When the customer asks “why?”, you point to the reasoning layer the human produced before the inference happened. The account exists because someone was accountable. Explainability becomes possible because explanation was built into the sequence from the start. Epistemic warrant is established, not assumed.

Reasoning isn’t a nice-to-have layer in your governance stack. It’s the basement. It’s the foundation everything else rests on. Without it, your compliance framework is floating. Your audit trail documents nothing but inference. Your interpretability dashboard shows you how the model moved from A to B—but no one can tell you why B was the right destination, or who decided A was the right starting point. The chair has to be occupied before the system runs, not after.
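One way to picture this ordering in software is a gate that refuses to run inference until a human account exists, and that keeps each output linked to the account that preceded it. What follows is a minimal sketch, not a description of any particular product: the names `Account`, `Ledger`, `run`, and `why` are hypothetical, and `infer` stands in for whatever model call a real system would make.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class Account:
    """What the human commits to before any inference runs (hypothetical schema)."""
    author: str                  # the person who can be asked "why?"
    values: tuple[str, ...]      # what matters in this decision
    invariants: tuple[str, ...]  # constraints the output must respect

    def is_complete(self) -> bool:
        return bool(self.author.strip()) and bool(self.values) and bool(self.invariants)


@dataclass
class Ledger:
    """Links every output to the human account that preceded it."""
    entries: list[tuple[Account, str, str]] = field(default_factory=list)

    def run(self, account: Account, prompt: str, infer: Callable[[str], str]) -> str:
        # The chair has to be occupied before the system runs, not after.
        if not account.is_complete():
            raise ValueError("no inference without a completed human account")
        output = infer(prompt)
        self.entries.append((account, prompt, output))
        return output

    def why(self, output: str) -> Account:
        """Answer "why?" by pointing to the human account, not to the model."""
        for account, _prompt, recorded in self.entries:
            if recorded == output:
                return account
        raise KeyError("no account on record for this output")
```

The design choice worth noticing is that `run` fails closed: an empty account is an error, not a warning. The sketch matches outputs by exact text for simplicity; a real system would presumably use stable identifiers.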

A Concrete Example

You need to write a difficult email. A client wants a refund; your policy says no.

The atrophied way: you open the chatbot, type “client is mad, write a polite but firm email saying no refunds,” and copy-paste whatever it generates. Three seconds later, you have a perfectly worded email. You’ve done zero reasoning. You have no idea if the tone is right for this specific relationship. You haven’t considered creative exceptions to the policy. You haven’t owned the difficult message of no. You just followed the blue line.

The navigation way: before you touch the AI, you identify three things. Values: Do I prioritize strict contract adherence or long-term relationship? I decide I value fairness, but also the integrity of the agreement we both signed. Invariants: No full cash refund. No apologizing for work quality—the work was good. Credit for future services is on the table. Accountability: I’m answering to my boss, who approves financial decisions, and to the client as a human being who feels wronged.

Only then do you go to the AI—not with “write an email,” but with constraints: “Draft three variations. Tone must be firm on policy but empathetic to their frustration. Do not apologize for work quality. Offer future credit as compromise.”

You reasoned. You set the grounds. The AI inferred within them. If the client calls screaming, you know exactly why you said what you said. You can stand in the space of reasons. You can give an account.

It’s more work. That’s the point. That’s the price of sovereignty.
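The refund example can be sketched in a few lines of Python. This is an illustration under stated assumptions: `Grounds` and `build_prompt` are hypothetical names, and the prompt layout is one arbitrary choice among many. The point is only that the prompt is derived from decisions the human has already written down, and that the function refuses to produce one if any of the three is missing.

```python
from dataclasses import dataclass


@dataclass
class Grounds:
    """The three things decided before touching the AI (hypothetical structure)."""
    values: list[str]
    invariants: list[str]
    accountable_to: list[str]


def build_prompt(task: str, grounds: Grounds) -> str:
    """Turn prior human reasoning into explicit constraints for the model."""
    if not (grounds.values and grounds.invariants and grounds.accountable_to):
        raise ValueError("decide values, invariants, and accountability first")
    lines = [task, "", "Constraints (do not violate):"]
    lines += [f"- {rule}" for rule in grounds.invariants]
    lines += ["", "Priorities to balance:"]
    lines += [f"- {value}" for value in grounds.values]
    # accountable_to stays out of the prompt: it records who the human answers to.
    return "\n".join(lines)


refund = Grounds(
    values=["fairness to the client", "integrity of the agreement we both signed"],
    invariants=[
        "No full cash refund.",
        "Do not apologize for work quality.",
        "Offer credit for future services as a compromise.",
    ],
    accountable_to=["my boss, who approves financial decisions", "the client"],
)
print(build_prompt(
    "Draft three variations. Tone must be firm on policy but empathetic.",
    refund,
))
```

If the client calls, the `refund` object is the account: it says what was weighed and who it was owed to, independent of whatever the model drafted.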

Navigation Methodology

Front-load the encounter with deliberate reasoning over values, invariants, boundaries, and contested edges, all before the AI executes. The human does the work first. The interface becomes a forcing function for human sovereignty.

What happens when humans can’t keep up? The system slows down. This is not failure. This is governance. Designed for AI in relation to humans, not AI routing around humans. In a world obsessed with friction-free speed, we need to artificially reintroduce healthy friction. Cognitive speed bumps. The reasoning muscle burns when you use it—that’s how you know it’s working.

Everyone else is optimizing for speed. The actual need is for infrastructure that keeps humans capable of being in the loop. You can’t 10x reasoning. You can’t automate accountability.
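In engineering terms, "the system slows down" resembles backpressure: new work is admitted only while the human still has capacity to reason about it. The sketch below is a toy illustration of that idea, assuming a single reviewer and a fixed limit; `ReviewGate`, `max_pending`, and the method names are hypothetical.

```python
from collections import deque


class ReviewGate:
    """Admits new AI tasks only while the human has capacity (hypothetical sketch)."""

    def __init__(self, max_pending: int) -> None:
        if max_pending < 1:
            raise ValueError("max_pending must be at least 1")
        self.max_pending = max_pending
        self._pending: deque[str] = deque()

    def submit(self, task: str) -> bool:
        """Queue a task, or refuse it when the human is at capacity."""
        if len(self._pending) >= self.max_pending:
            # Slow down rather than route around the human.
            return False
        self._pending.append(task)
        return True

    def human_completes_next(self) -> str:
        """The human finishes reasoning about the oldest task, freeing one slot."""
        return self._pending.popleft()


gate = ReviewGate(max_pending=2)
gate.submit("refund email")       # accepted
gate.submit("contract renewal")   # accepted
gate.submit("pricing change")     # refused until the human catches up
```

A refused `submit` is the speed bump: throughput is capped by human attention, by design.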

VI. The Off-Ramp

This is not an indictment. It’s an invitation.

If you’re building AI and something feels off—this is what’s off. The terminology captured a word it shouldn’t have. The question got closed before it opened. You’re not crazy for feeling the dissonance.

If you’re using AI and you sense you’re losing something—this is what you’re losing. The capacity to give an account. The muscle that only develops when you do the work first.

If you’re in a boardroom wondering how we got here—this is how. Speed outpaced comprehension. The market named the thing before anyone understood it. And now the name is load-bearing and no one knows how to question it without threatening the whole structure.

If you’re an enterprise struggling to explain your AI to customers, regulators, or your own board—this is why. You’re trying to produce an account from a system that never reasoned. The architecture made explanation impossible before the first token was generated.

If you’re building governance frameworks and wondering why they never quite satisfy anyone—this is why. You’re building architecture without a foundation. The chair at the center of your framework is empty. Someone has to sit in it before the system runs, not after.

Here’s the off-ramp:

The order matters. Humans reason. Systems infer.

That’s not anti-AI. That’s pro-human-sovereignty. It’s the design principle that lets AI be genuinely useful without making us smaller.

This isn’t about rejecting AI. It’s about putting it in its proper place—downstream of human reasoning, not upstream of it. The systems are useful precisely because they’re good at inference. Let them do what they do. Just don’t let them do what they can’t.

You can build this way. I have. The systems slow down when humans can’t keep up. The interfaces force humans to do the cognitive work first. The governance structures treat human limitation as a design constraint, not a bug to be patched.

None of this requires abandoning AI. It requires putting it in proper relation.

The question was closed. It can be reopened. We just have to slow down long enough to ask it.

Retire “human in the loop.” The human IS the loop. AI just drives on it.

Because a society that cannot reason is a society that can only obey. It can only follow the blue line wherever it leads. And I don’t know about you, but I like having some say in where I’m going.

References

Bender, E. M., Gebru, T., McMillan-Major, A., & Shmitchell, S. (2021). On the dangers of stochastic parrots: Can language models be too big? 🦜. In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’21) (pp. 610–623). Association for Computing Machinery.

Marcus, G. (2025). A knockout blow for LLMs? Marcus on AI (Substack).

Mitchell, M. (2024). The LLM reasoning debate heats up. AI: A Guide for Thinking Humans (Substack).

Sparrow, B., Liu, J., & Wegner, D. M. (2011). Google effects on memory: Cognitive consequences of having information at our fingertips. Science, 333(6043), 776–778.

Symons, J., & Alvarado, R. (2019). Epistemic entitlements and the practice of computer simulation. Minds and Machines, 29(1), 37–60.

Vallor, S. (2016). Technology and the virtues: A philosophical guide to a future worth wanting. Oxford University Press.

About the Author

Nelson Spence is the founder and Principal Researcher at Project Navi LLC, an AI governance and safety company developing frameworks for human-AI collaboration. His work applies insights from seven years in behavioral health and peer support to the challenge of AI alignment—treating both human burnout and AI hallucination as symptoms of coerced coherence without adequate infrastructure. He builds systems designed for AI in relation to humans, not AI routing around them.